Web Sustainability in Development

Building our web solutions with the future of people, animals, our planet, and our values in mind.

Intro

Making your web solution greener, that is, more sustainable and with a lower carbon footprint, can be complex and challenging. It's not only about choosing a hosting provider that uses renewable energy, although that is an important step - it's the whole setup. Through years of continuous improvement, we've learned how to approach a build, from hosting to backend and frontend technologies, so that it is optimal, consumes the least amount of energy and is, in essence, GREEN.

The Proof

Before digging into the details, let's prove that Innocode is able to deliver. Here is an example of a WordPress site that has recently been updated and tested using the Website Carbon Calculator:

Brød & Korn - a great source of flavorful and scrumptious recipes, packed full of images. We've optimized the whole site and it is now cleaner than 87% of web pages tested.

This web page emits the amount of carbon that 1 tree absorbs in a year. Pretty, pretty good.

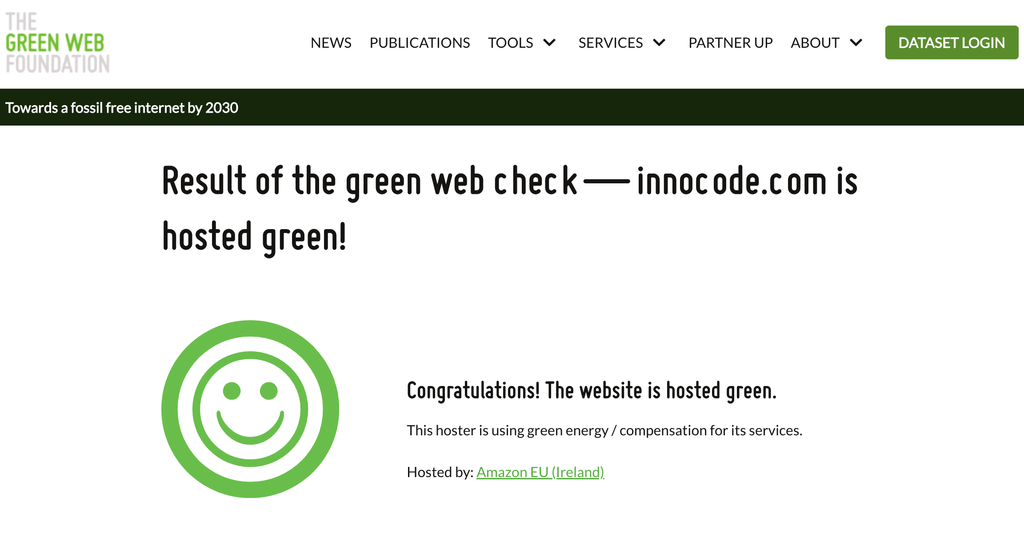

Before optimization, our own site, Innocode.com, was dirtier than 58% of sites tested; now it is cleaner than 80%!

Hosting

First, you should ask yourself: Do we need a server? It depends on the nature of your content, which can be static or change dynamically. When content is static, meaning it is pretty much fixed and will not change frequently, it can be much more efficient to host the site as plain HTML pages on services like Cloudflare, Amazon S3 or Netlify. Examples of such sites include reports, landing pages, simple portfolios, CVs, etc.

Otherwise, if you'd like to continuously change and update your content, the site becomes more complex. You need to think about the database, server environment, caching, etc. There are a lot of hosting providers in The Green Web Foundation directory to choose from. Most of our clients' web solutions fall under complex builds, and our choice is AWS together with Cloudflare.

Once this first step is done and your environment is defined, let's look at how we set up our backend and frontend.

Backend

Your backend should be as fast as possible. To reach this goal, we need to optimize both database queries and requests to external services.

Database setup and size

WordPress has its own core database tables, meaning that for most projects you do not need to worry about creating custom tables or setting indexes. However, we highly recommend following some rules when retrieving data from the database, such as:

- Avoid queries by meta values when possible, as the meta value column is not indexed. Instead, try to use taxonomies, or create custom tables for more complex cases.

- Use object caching to store the results of heavy queries with a proper TTL (time to live) that suits the task logic, and remember to flush the cache on content update.

The number of database requests decreases significantly with persistent object caching because query results are kept between requests. WordPress does not enable this by default, but we prefer to enable it, and our choice here is Redis (Memcached is an alternative).

Another challenge is keeping the database size to a minimum. A few key areas to consider:
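The caching rule above can be sketched in a few lines. This is only an illustration in Python, not our production code: `TTLCache` is a hypothetical in-memory stand-in for a persistent store such as Redis, and `cached_query` is a hypothetical helper name. In a WordPress project the same pattern would be written in PHP against the object cache.

```python
import time
from typing import Any, Callable, Dict, Optional, Tuple


class TTLCache:
    """Hypothetical in-memory stand-in for a persistent object cache."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock
        self._store: Dict[str, Tuple[float, Any]] = {}  # key -> (expires_at, value)

    def get(self, key: str) -> Optional[Any]:
        entry = self._store.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if self._clock() >= expires_at:
            # The entry outlived its TTL: drop it and report a miss.
            del self._store[key]
            return None
        return value

    def set(self, key: str, value: Any, ttl: float) -> None:
        self._store[key] = (self._clock() + ttl, value)

    def delete(self, key: str) -> None:
        # Flush a single key, e.g. when the related content is updated.
        self._store.pop(key, None)


def cached_query(cache: TTLCache, key: str, ttl: float,
                 run_query: Callable[[], Any]) -> Any:
    """Return the cached result, running the heavy query only on a miss.

    Simplification: a query that returns None is treated as a miss.
    """
    value = cache.get(key)
    if value is None:
        value = run_query()
        cache.set(key, value, ttl)
    return value
```

A content update hook would then call `cache.delete("popular_posts")` (a hypothetical key), so editors never see stale results while the TTL is still running.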

- Comments - Disable the comments functionality if it's not used; this also keeps comment spam out of the database.

- Post revisions - Limit the number of revisions by default and increase it only when it really makes sense. We usually set this value to 15, and for most of our clients that's enough. However, we do not recommend disabling this feature completely, as it's always important to be able to revert content to a previous version.

- Autosaving - By default, WordPress autosaves every minute, but we changed this interval to 3 minutes without any complaints from the editorial staff. However, we do not recommend disabling autosaving, as it's a good fallback for situations when the internet connection gets lost or the browser is closed accidentally.

- Trash - It's also possible to control how many days deleted posts stay in the Trash. We use the core default of 30 days, which gives ample time to reconsider whether a deleted post could be relevant again.

External Services - APIs and Plugins

Besides database queries, another common task is requesting external APIs. Of course, we are limited by the provider's specification and requirements when integrating solutions. However, before implementing anything, read their documentation closely, not only for examples but also for ways to minimize the amount of data we retrieve, e.g. by specifying a fields parameter. Also remember to limit the number of items in a list.

When we know how, and how often, data is updated on the other end, it's possible to cache the results in WordPress transients with a proper TTL. Set sensible timeouts when integrating with 3rd party providers, as we cannot be sure about the stability of external services. If they suffer serious performance issues or even downtime, your solution can struggle as well.

At Innocode, we are always careful about adding new dependencies to a project. Besides security issues, a dependency can lead to performance problems and database bloat, add external calls, and increase response time and carbon footprint. We almost never use 3rd party themes and prefer to use only well-known plugins for particular tasks or features, like Yoast for SEO and WooCommerce for eCommerce.

Plugins can add new tables to the database, new settings to the options table, new post types, etc., but not all of them provide a tool to clean up the database when you remove the plugin. After a plugin has been deleted, check the database and verify that everything has been cleared.

Always monitor your applications. We use New Relic as our monitoring tool so we can react quickly to performance issues, which could be related to a recent dependency update (including WordPress core), issues with an external API, etc.
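As a small illustration of asking only for what you need, here is a sketch in Python that builds a list request restricted to a few fields and a capped number of items. The parameter names `fields` and `per_page`, the helper `build_list_url` and the URL are all assumptions for the example; every provider names these differently, so check their documentation.

```python
from typing import Sequence
from urllib.parse import urlencode


def build_list_url(base_url: str, fields: Sequence[str], limit: int) -> str:
    """Build a list URL that requests only selected fields and a capped item count.

    `fields` and `per_page` are hypothetical parameter names used for illustration.
    """
    if limit < 1:
        raise ValueError("limit must be at least 1")
    # safe="," keeps the comma-separated field list readable in the URL.
    query = urlencode({"fields": ",".join(fields), "per_page": limit}, safe=",")
    return f"{base_url}?{query}"


url = build_list_url("https://api.example.com/items", ["id", "title"], 10)
# url == "https://api.example.com/items?fields=id,title&per_page=10"
```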
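One possible way to combine a timeout with cached results is sketched below in Python. It is an illustration under assumptions, not a description of WordPress transients: `fetch_with_fallback`, `http_get` and the plain dictionary used as a cache are hypothetical. The idea is that a slow or failing provider costs at most the timeout, and an expired copy is served when a refresh fails.

```python
import time
import urllib.request
from typing import Any, Callable, Dict, Tuple

CacheStore = Dict[str, Tuple[float, Any]]  # key -> (expires_at, value)


def http_get(url: str, timeout: float = 5.0) -> bytes:
    """Fetch a URL, giving up after `timeout` seconds instead of hanging."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return response.read()


def fetch_with_fallback(key: str, fetch: Callable[[], Any], cache: CacheStore,
                        ttl: float, now: Callable[[], float] = time.time) -> Any:
    """Serve fresh cached data; otherwise refetch, falling back to stale data on errors."""
    entry = cache.get(key)
    if entry is not None and now() < entry[0]:
        return entry[1]  # Still fresh: no external call at all.
    try:
        value = fetch()
    except Exception:
        if entry is not None:
            return entry[1]  # Provider is failing: an expired copy beats an error page.
        raise  # Nothing cached yet, so the caller has to handle the failure.
    cache[key] = (now() + ttl, value)
    return value
```

A call could look like `fetch_with_fallback("feed", lambda: http_get("https://api.example.com/feed"), cache, ttl=600)`, where the URL and the 10-minute TTL are placeholders.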

Cache

In this last part of the backend section, let's discuss page caching. This type of cache can have a major impact on your application's performance and carbon footprint, because requests from users or bots do not trigger database queries or external API calls; instead, a ready-made HTML response is sent from storage. However, it highly depends on project requirements, and not all requests can be cached. For example, personalized data should be retrieved directly, but it's possible to send all common data from the cache and then retrieve the rest with REST API or GraphQL calls.

We are not fans of well-known solutions like WP Super Cache or W3 Total Cache, although they're still good solutions when properly configured. Instead of these plugins, we use Cloudflare HTML cache or APO when the project domain is proxied through this service, or we reuse solutions that already exist in the project. As mentioned above, we have a persistent object cache, so we decided to use Redis for page caching as well on most projects. For this purpose, we install the Batcache plugin with our own add-on for more flexible flushing on content update.
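The split between cacheable and personalized requests can be sketched roughly like this. The Python below is a simplified illustration and not how Batcache or Cloudflare decide internally. `is_cacheable`, `serve` and the cookie prefix list are assumptions for the example: a real project would list the cookies that mark a personalized response in its own setup.

```python
from typing import Callable, Dict, Mapping

# Assumed example list: cookie name prefixes that signal a personalized response.
PERSONALIZED_COOKIE_PREFIXES = ("wordpress_logged_in_", "comment_author_")


def is_cacheable(method: str, cookies: Mapping[str, str]) -> bool:
    """Only anonymous read requests may be answered from the page cache."""
    if method.upper() not in ("GET", "HEAD"):
        return False
    return not any(name.startswith(PERSONALIZED_COOKIE_PREFIXES) for name in cookies)


def serve(method: str, path: str, cookies: Mapping[str, str],
          page_cache: Dict[str, str], render: Callable[[str], str]) -> str:
    """Return ready HTML from the cache when allowed, rendering only on a miss."""
    if not is_cacheable(method, cookies):
        return render(path)  # Personalized or state-changing: always render.
    html = page_cache.get(path)
    if html is None:
        html = render(path)  # The expensive part: queries, API calls, templates.
        page_cache[path] = html
    return html
```

In this sketch, a second anonymous visit to the same path returns the stored HTML without calling `render` again, which is where the saved queries and energy come from.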

Frontend

We don't always have control over designs. We usually do the web design for our clients, but a few of them work with an external design partner. We certainly do not want to limit designers' creativity, and we have found a number of ways to be more environmentally sustainable in the frontend build.

Fonts

One area that can be seen as part of good design is the use of typography. Besides choosing fonts that help with branding, readability, and accessibility, we must consider their energy consumption. Fonts can seem like a minor thing, but each one is an additional resource that can slow down the rendering of your site and add to overall page weight.

Compared with system fonts, custom fonts can be problematic for a green web solution. System fonts are more efficient since they are already installed on your users’ devices. Fonts like Arial, Courier and Times New Roman don’t require any loading time on the many devices and operating systems that ship with them. If custom fonts are the way to go, we recommend at least minimizing the number and size of font sets: use only the weights you need and limit the character range to the characters that are actually used on your site.
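To make "limit characters to the ones actually used" concrete, here is a small Python sketch that turns a sample of site text into a value in the syntax of the CSS `unicode-range` descriptor. The function name `unicode_range` is hypothetical, and the sketch assumes you can collect representative text from the site. The result could guide a font subsetting tool or an `@font-face` rule.

```python
def unicode_range(text: str) -> str:
    """Collapse the characters used in `text` into CSS unicode-range syntax."""
    # Keep printable characters only (spaces stay, line breaks and tabs go).
    points = sorted({ord(ch) for ch in text if ch.isprintable()})
    ranges = []
    for cp in points:
        if ranges and cp == ranges[-1][1] + 1:
            ranges[-1][1] = cp  # Extend the current run of consecutive code points.
        else:
            ranges.append([cp, cp])  # Start a new run.
    return ", ".join(
        f"U+{lo:04X}" if lo == hi else f"U+{lo:04X}-{hi:04X}"
        for lo, hi in ranges
    )


print(unicode_range("abc"))   # U+0061-0063
print(unicode_range("Brød"))  # U+0042, U+0064, U+0072, U+00F8
```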

Libraries and Frameworks

The same rule as for the backend applies here when we need to install a dependency (a CSS or JS library or framework) for a particular purpose. It can be much more effective to write a few lines of code inside your project than to install a big library that significantly increases the size of the bundle.

Headless: SPA and REST API->GraphQL

We like the approach of developing sites as an SPA with content pre-rendering, which makes it possible to transfer only data between server and user. This method is more efficient and helps with web sustainability compared to full page reloads, which send the same HTML parts again and again as users go through different pages of the site. At the same time, the site does not lose SEO score, as it has pre-rendered pages for crawlers.

To retrieve data from the backend, we work with REST API but are starting to use GraphQL, as it allows us to write queries that return only the data we need and to combine different types in one response, instead of sending several requests to different endpoints as with REST API.

We follow the common practice of minifying CSS and JavaScript files, and we also divide them into chunks that are lazy loaded. This way, when users open an article via a shared link from a social network and have not visited the front page, we can skip sending the parts of the files they don't need, which makes delivery more efficient. This works pretty well in combination with our SPA approach.

Images

Nowadays images are an important part of a site's content but, at the same time, they make up a heavy part of the resulting page size and weight. It is therefore just as important that all images are optimized.

It's a challenging task to find a good balance between size and quality. To get there, we use our own serverless function, which is called during file upload to storage and optimizes the image through Node.js libraries. Then on the frontend, we lazy load images and serve different files for different screen sizes, also known as responsive images.
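As an illustration of the frontend side, the Python sketch below generates an `img` tag with a `srcset` and native lazy loading. It rests on assumptions: the `?w=` resizing parameter, the helper names and the URL are hypothetical, and your image storage may expose resized variants in a different way.

```python
from html import escape
from typing import Iterable


def build_srcset(base_url: str, widths: Iterable[int]) -> str:
    """List one candidate per width, smallest first, using width descriptors."""
    return ", ".join(f"{base_url}?w={w} {w}w" for w in sorted(set(widths)))


def build_img_tag(base_url: str, widths: Iterable[int], alt: str,
                  sizes: str = "100vw") -> str:
    """Build a lazy-loaded responsive image tag; the smallest width is the fallback src."""
    ordered = sorted(set(widths))
    if not ordered:
        raise ValueError("at least one width is required")
    srcset = build_srcset(base_url, ordered)
    return (
        f'<img src="{escape(base_url)}?w={ordered[0]}" '
        f'srcset="{escape(srcset)}" sizes="{escape(sizes)}" '
        f'alt="{escape(alt)}" loading="lazy">'
    )


tag = build_img_tag("https://cdn.example.com/bread.jpg", [1280, 640], "Fresh bread")
```

The browser then picks the smallest file that fits the screen, and `loading="lazy"` defers images that are not yet near the viewport.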

Conclusion

We've briefly described how we build sites with eco-friendliness and sustainability in mind. At the same time, we do not want to overdo it: our aim is to find a good balance between awesome design, possibly with cool animations, functionality that satisfies our clients' goals, and good performance with a reduced carbon footprint.